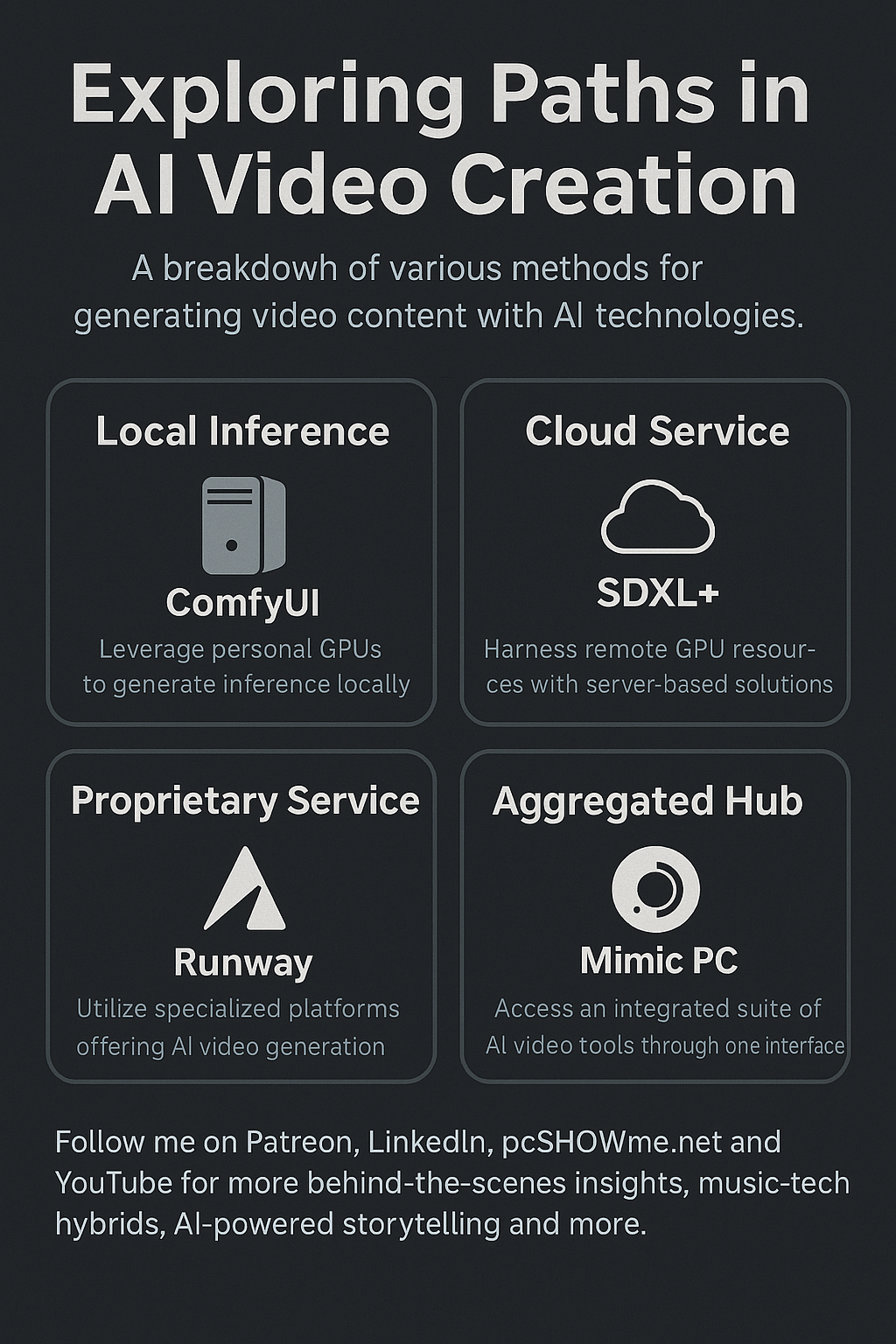

Building Smarter: How I Strategize Local vs Cloud AI Workflows (and Why You Should Too)

In the modern AI creator space, knowing when to run things locally, when to offload to the cloud, and when to use turnkey platforms is not just a smart strategy—it’s the difference between burnout and breakthrough.

As someone balancing projects like Samaritan (my Linux-based modular AI backend) and multimedia production under my pcSHOWme brand, I’ve developed a flexible, power-efficient system that blends the best of each world. Here’s a breakdown of how I think about it—and how you might too.

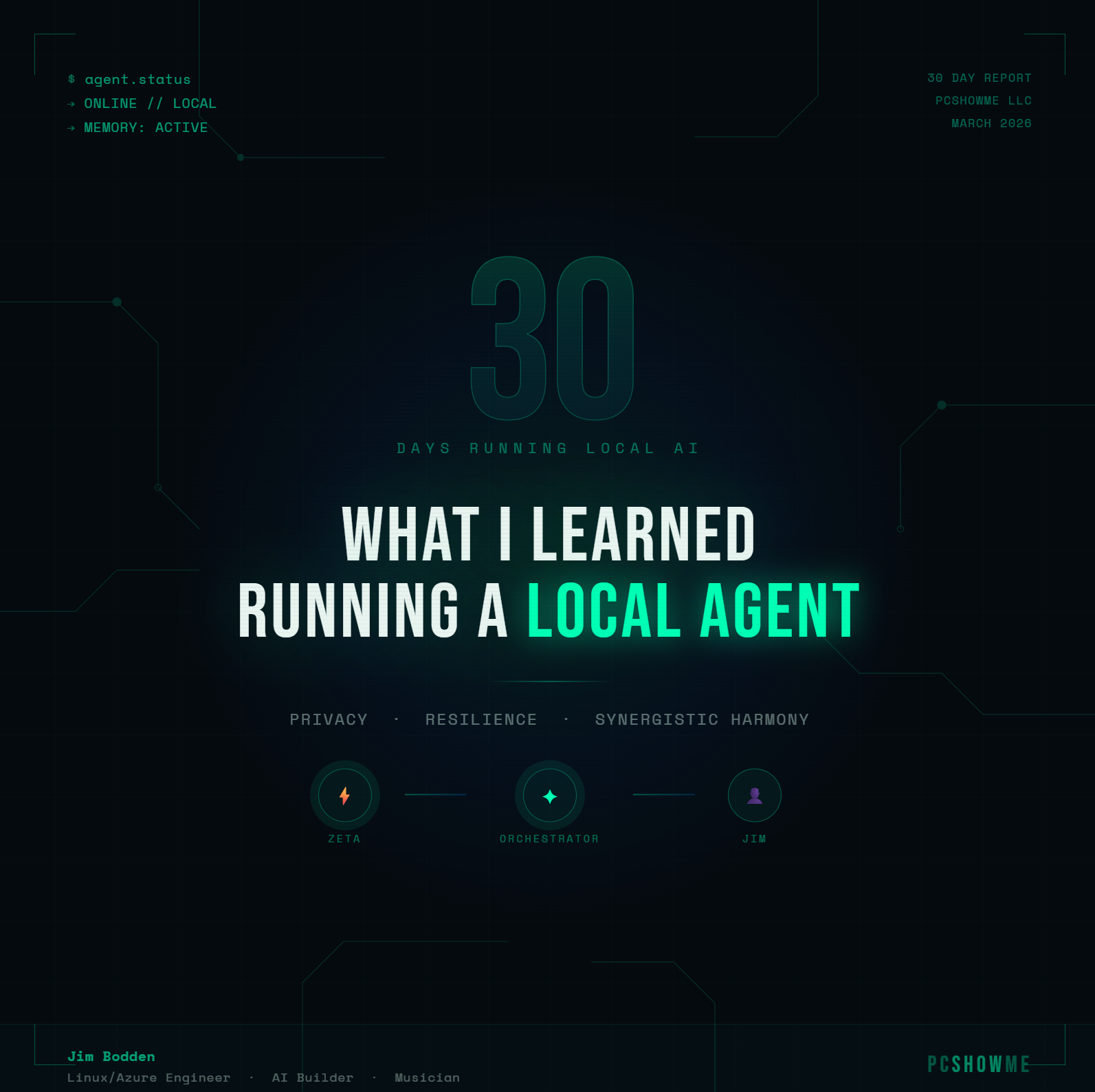

🚀 LOCAL AI: Where the Real Workhorse Lives

Main Tools: ComfyUI, Ollama, local LLM hosting, audio/video processing

Runs on: High-performance workstation (RTX 3080 Ti now, 4090 soon), Ubuntu/Linux

Use When:

- You want full control over assets, privacy, and workflows

- You're running ComfyUI pipelines or Stable Diffusion image/video generation

- You're generating assets repeatedly or across multiple workflows

- Speed matters and GPU power is in your hands

Why It’s Powerful:

- No per-minute billing

- You own the latency and the quality

- Local hardware = fewer surprises, more customization

☁️ CLOUD AI: When Scale (or Sanity) Demands It

Main Tools: Azure VMs, RunPod, Lambda Labs, Colab Pro

Runs on: Enterprise GPUs (A100s, V100s, etc.)

Use When:

- You need to generate SDXL/HD video or massive image batches

- You’re testing tools that your local GPU can’t handle well

- You're running large LLMs with multi-user APIs or dashboard integrations

Why It’s Smart:

- Spin up when needed, shut down when done

- Great for experimentation before investing in hardware

- Ideal for heavy parallel workflows or testing bleeding-edge models
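One way to put numbers on "experiment before investing in hardware" is a rough break-even estimate: how many GPU-hours of cloud rental would add up to the price of a local card. The sketch below is only an illustration. The function name `break_even_hours` and every dollar figure in it are hypothetical placeholders, not real prices, so plug in your own quotes.

```python
def break_even_hours(
    hardware_cost: float,
    cloud_rate_per_hour: float,
    local_power_cost_per_hour: float = 0.0,
) -> float:
    """Return the GPU-hours at which buying hardware matches renting cloud time.

    All inputs share one currency. The model is deliberately simple: it ignores
    resale value, depreciation, storage fees, and your own setup time.
    """
    saving_per_hour = cloud_rate_per_hour - local_power_cost_per_hour
    if saving_per_hour <= 0:
        raise ValueError("Cloud is not more expensive per hour; no break-even point.")
    return hardware_cost / saving_per_hour


if __name__ == "__main__":
    # Placeholder numbers for illustration only (not real quotes).
    hours = break_even_hours(
        hardware_cost=2000.0,
        cloud_rate_per_hour=2.0,
        local_power_cost_per_hour=0.10,
    )
    print(f"Break-even after roughly {hours:.0f} GPU-hours")
```

If your expected usage sits well below the break-even figure, renting is likely the cheaper route; well above it, local hardware starts to pay for itself.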

🤖 AGGREGATED CLOUD SERVICES: Plug-and-Play Efficiency

Main Tools: Mimic PC, RunwayML, Replicate, Leonardo.AI

Use When:

- You want quick access to pre-built pipelines

- You need AI results fast, without full-stack management

- You’re demoing or testing concepts

Why It Works:

- Time-efficient for experimentation

- No system setup or deep technical skills needed

- Perfect for tutorials, students, or side-projects

🧵 PROPRIETARY SERVICES: Convenience at a Creative Cost

Main Tools: RunwayML, Suno, ElevenLabs, Descript, etc.

Use When:

- You need polished results fast

- You’re okay trading some flexibility for creative speed

- You want to move quickly on non-critical assets (e.g., placeholders, previews)

Why It’s Worth It (Sometimes):

- Reliable, great UX

- Useful for prototyping or placeholder content

- Can be part of your hybrid stack

🔄 HYBRID STRATEGY: My Real-World Flow

I typically:

- Run ComfyUI and local LLMs on my Linux box

- Offload heavy SDXL or long-form AI video jobs to Azure when needed

- Use Mimic PC or Runway for specific visual workflows or experimentation

- Use a local-first approach for anything privacy-sensitive or frequently repeated

The key isn’t picking one approach—it’s knowing which tool to reach for based on cost, speed, scale, and control.
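The flow above can be written down as a small decision rule. This is a minimal sketch of how I might encode it, not a tool I actually run: the `Job` fields, the `Target` names, and the order of the checks are all assumptions made for illustration.

```python
from dataclasses import dataclass
from enum import Enum


class Target(Enum):
    LOCAL = "local workstation"
    CLOUD_GPU = "rented cloud GPU"
    HOSTED_SERVICE = "hosted service"


@dataclass
class Job:
    """Hypothetical description of one piece of AI work."""
    name: str
    privacy_sensitive: bool = False
    repeated_often: bool = False
    fits_local_gpu: bool = True
    quick_experiment: bool = False


def choose_target(job: Job) -> Target:
    """Pick where to run a job, checking the strictest constraint first."""
    # Privacy-sensitive work stays on local hardware, even if it runs slowly.
    if job.privacy_sensitive:
        return Target.LOCAL
    # Jobs the local GPU cannot handle well get offloaded to a cloud GPU.
    if not job.fits_local_gpu:
        return Target.CLOUD_GPU
    # Frequently repeated work is cheapest on hardware you already own.
    if job.repeated_often:
        return Target.LOCAL
    # One-off experiments can use a hosted, pre-built pipeline.
    if job.quick_experiment:
        return Target.HOSTED_SERVICE
    # Default to local-first.
    return Target.LOCAL


if __name__ == "__main__":
    jobs = [
        Job("client voice notes", privacy_sensitive=True),
        Job("long-form AI video", fits_local_gpu=False),
        Job("daily thumbnail batch", repeated_often=True),
        Job("try a new visual style", quick_experiment=True),
    ]
    for job in jobs:
        print(f"{job.name}: {choose_target(job).value}")
```

The ordering is the design choice here: control and privacy win over capacity, capacity wins over cost, and convenience comes last. Reorder the checks if your priorities differ.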

🔧 Final Thoughts:

The more projects you juggle, the more critical it becomes to build workflows that serve your mind, time, and mission. With the right mix of local power, cloud elasticity, and service-layer convenience, you’re no longer just using AI—you’re commanding it.

Stay intentional. Stay adaptable. And if you ever feel stuck, just remember: you can always rewire your workflow to serve your creative vision.

Ready to build smarter? Let’s go.

By Jim Bodden (pcSHOWme)

Follow me on Patreon, LinkedIn, pcSHOWme.net and YouTube for more behind-the-scenes insights, music-tech hybrids, AI-powered storytelling and more.